Deontology vs. Consequentialism

The Trolley Problem Solution

When evaluating public policy, and decision-making more generally, there are two big competing moral systems: Deontology and Consequentialism (or Utilitarianism). Both are of course concerned with “doing the right thing” or “not doing the wrong thing” but differ in how they define right and wrong. Today on Bradical Thinking, we’ll be going over these two systems, pros and cons, and why I’m a morally great and virtuous person.

Consequentialism

Consequentialism, as the name would suggest, is concerned with the consequences of any given action or social policy. When taking any action or enacting a policy, we should consider who is “better off”, who is “worse off”, and by how much to determine if it’s the right thing to do.

There’s a concept in economics called “utility” which basically just means the value or happiness that someone gets out of something. Generally speaking, we’re all out here making decisions trying to maximize our own utility. Or at the very least, perceived or expected utility (we will address this later). For instance, say you had like $100k to spend. You could either buy:

A Tesla Model S Plaid, with full self-driving capabilities

OR

Let’s say you want the chair more. You’d be happier with the chair than the Tesla. You think you’d get more utility out of the chair. Then you buy the chair.

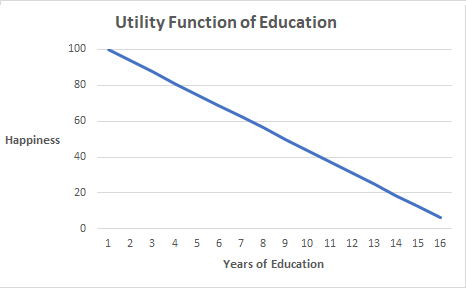

If you go to an economics classroom there’s a concept called a Utility Function, a curve on a graph where you track (and mathematically describe) how much utility someone gets out of a given good or service. Value is measured in a fictional unit called “utils”, and you solve all kinds of problems involving what amount and what combination of goods and services will maximize someone’s utils for a given cost. It’s really stupid, don’t go to college.

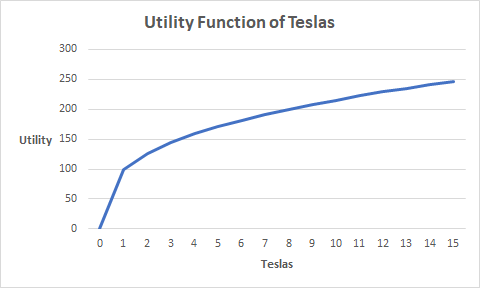

BUT stick with me because it will be a useful frame to evaluate Consequentialism through. We can imagine utility functions for Teslas and Club Armchairs as well. If I buy the Tesla I’ll get a certain amount of happiness (or “utils”), and if I buy two of them I’ll get a different amount of value. Same thing with the chair. Note that it’s not necessarily linear; I’m not literally exactly twice as happy having two Teslas as I am having just one. In fact, in that example I’m probably not getting much utility at all out of the second, third, fourth, etc, Tesla I buy since I can only drive one at a time. The utility function of Teslas for us might look like this:

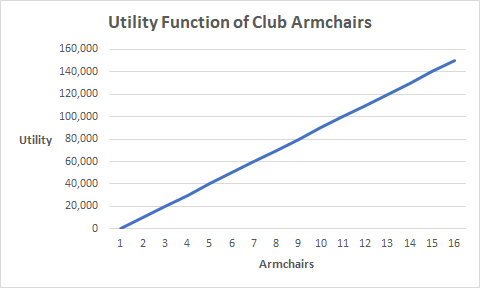

And so in this example you’d consider your utility function for Teslas, compare it with your utility function for Club Armchairs, consider what your position is right now and then make a decision based on whatever path you like better. For me I know what I’d do because my utility function for Club Armchairs looks like this:

So in this case, I believe I will get more utility purchasing that Club Armchair than another Tesla, and I make my decision accordingly.
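If you want to see that comparison as arithmetic, here is a minimal Python sketch. Every shape and number in it is an assumption made up for illustration: `tesla_utils` flattens out fast after the first car, `armchair_utils` is a straight upward line, and `marginal` and `best_purchase` are hypothetical helper names, not anything from an economics textbook.

```python
def tesla_utils(n: int) -> float:
    """Assumed utility of owning n Teslas: big jump for the first, little after."""
    return 100.0 * (1 - 0.1 ** n)


def armchair_utils(n: int) -> float:
    """Assumed utility of owning n Club Armchairs: a steadily rising line."""
    return 60.0 * n


def marginal(utility, owned: int) -> float:
    """Extra utils from buying one more unit, given how many you already own."""
    return utility(owned + 1) - utility(owned)


def best_purchase(teslas_owned: int, chairs_owned: int) -> str:
    """Pick whichever next purchase adds the most utils from where you are now."""
    options = {
        "Tesla": marginal(tesla_utils, teslas_owned),
        "Club Armchair": marginal(armchair_utils, chairs_owned),
    }
    return max(options, key=options.get)


# Someone who already owns one Tesla and no armchairs:
print(best_purchase(teslas_owned=1, chairs_owned=0))  # Club Armchair
```

The point of `marginal` is that the decision depends on where you already are on each curve, not on the total height of the curve.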

Let’s Get This Utility

The thing is, you could say each of us has a “utility function” for literally every decision we ever make. One for buying Teslas, one for buying armchairs, one for saving your money and not buying anything. And you have a utility function that describes how happy you’d be going for a run, baking a pie, seeing the Madame Web movie (0 utility) and you make purchasing decisions and life decisions based on the fact that each one has some kind of cost (time, money, and/or effort) and which one is going to give you the most utility for that cost.

Now when it comes to the topic of the day, morality, we can go to English philosopher Jeremy Bentham. Bentham is often considered the founder of the Utilitarian school of thought. In his work An Introduction to the Principles of Morals and Legislation he states:1

Nature has placed mankind under the governance of two sovereign masters, pain and pleasure. It is for them alone to point out what we ought to do, as well as to determine what we shall do. On the one hand the standard of right and wrong, on the other the chain of causes and effects, are fastened to their throne. They govern us in all we do, in all we say, in all we think: every effort we can make to throw off our subjection, will serve but to demonstrate and confirm it. In words a man may pretend to abjure their empire: but in reality he will remain subject to it all the while. The principle of utility recognizes this subjection, and assumes it for the foundation of that system, the object of which is to rear the fabric of felicity by the hands of reason and of law. Systems which attempt to question it, deal in sounds instead of sense, in caprice instead of reason, in darkness instead of light.

He goes on with regards to society and the community:

The interest of the community is one of the most general expressions that can occur in the phraseology of morals: no wonder that the meaning of it is often lost. When it has a meaning, it is this. The community is a fictitious body, composed of the individual persons who are considered as constituting as it were its members. The interest of the community then is, what is it?—the sum of the interests of the several members who compose it.

It is in vain to talk of the interest of the community, without understanding what is the interest of the individual. A thing is said to promote the interest, or to be for the interest, of an individual, when it tends to add to the sum total of his pleasures: or, what comes to the same thing, to diminish the sum total of his pains.

An action then may be said to be conformable to the principle of utility, or, for shortness sake, to utility, (meaning with respect to the community at large) when the tendency it has to augment the happiness of the community is greater than any it has to diminish it.

A measure of government (which is but a particular kind of action, performed by a particular person or persons) may be said to be conformable to or dictated by the principle of utility, when in like manner the tendency which it has to augment the happiness of the community is greater than any which it has to diminish it.

Alright that’s a bunch of tedious, scholarly, 18th century British gibberish that I know you skipped and didn’t actually read. So the modern Bradical Thinking summary is that:

Everyone has a utility function for everything they do and everything that happens to them

The right (or wrong) thing to do should be determined by how much the overall sum total utility of everyone involved is affected (increased or decreased) by that action

Everyone’s utility function for everything is different, because everyone’s at a different place in life and values things differently. If I give $200k to someone who’s destitute, that could be the catalyst for them to completely turn their life around and bring them from total abject misery to a wonderful and stable level of fulfilling happiness. If I give that same $200k to Lebron James, he won’t even notice; his utility from that extra $200k is basically zero. If I want to maximize total utility here, I’ll give the money to the poor person.

We Live In A Society

When we start aggregating everyone’s utility function for everything and looking for the maximum of that omni-utility curve like Bentham suggested, it has implications for social policy decisions. For example, instead of just me having money to give out to either a poor person or Lebron James, I could also imagine taking money from Lebron James and giving it to that poor person. Of course if I just take $200k from Lebron James without his permission, you could say that’s wrong because it’s stealing. But you could also say he’s filthy rich and he and his family will still be living a life of comfort and luxury if we take it from him, whereas that poor person could use it to achieve some measure of stability. Lebron’s quality of life will not change in the slightest if we take $200k from him, whereas that poor person’s life might be completely turned around.

You could say we’re increasing total utility by taking some money from the rich and giving it to the poor. And if you’re a Consequentialist, maybe you’d say we should do that as a society.

You can apply this to all kinds of social policy decisions. Roughly speaking, Consequentialism is saying we ought to seek to do the most good for the most people. Maximize the Benthamite utility function of society.
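Here is a rough Python sketch of the $200k example, both as a gift and as a transfer. It assumes a logarithmic utility of wealth, which is a common textbook simplification and not something Bentham specified, and the two wealth figures are invented placeholders (the rich one is not Lebron’s actual net worth). The names `utils` and `change_in_utils` are hypothetical.

```python
import math


def utils(wealth: float) -> float:
    """Assumed utility of wealth: logarithmic, so each extra dollar matters less."""
    return math.log(wealth)


def change_in_utils(wealth: float, delta: float) -> float:
    """How far one person's utility moves when their wealth changes by delta."""
    return utils(wealth + delta) - utils(wealth)


POOR_WEALTH = 1_000          # invented figure
RICH_WEALTH = 1_000_000_000  # invented figure
AMOUNT = 200_000

# Case 1: I hand out my own $200k to one of them.
gift_to_poor = change_in_utils(POOR_WEALTH, AMOUNT)
gift_to_rich = change_in_utils(RICH_WEALTH, AMOUNT)

# Case 2: the $200k is taken from the rich person and given to the poor one.
# Bentham's "interest of the community" is just the sum of the individual changes.
transfer_total = (
    change_in_utils(RICH_WEALTH, -AMOUNT) + change_in_utils(POOR_WEALTH, AMOUNT)
)

print(f"gift to poor person: {gift_to_poor:+.4f} utils")
print(f"gift to rich person: {gift_to_rich:+.4f} utils")
print(f"rich-to-poor transfer, community total: {transfer_total:+.4f} utils")
```

Under these assumptions the gift barely registers for the rich person, and the transfer comes out positive in total even though one person’s utility goes down. Notice the calculation says nothing about whether the rich person agreed to it.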

Math Ruins Everything

Now obviously when we make a decision for just ourselves, or when something happens to us individually, we aren’t taking out a graphing calculator and punching in numbers and plotting utility curves; we just do stuff that we expect to make us most happy, and all the graphs and theory I described above are just unnecessary mathematical abstractions.

However, if you want to use this as a guiding moral framework for wide social policy decisions that affect lots of people, we should caveat any analysis of utility functions with probability: the exact shape of that graph is not known with certainty in advance, only what you expect based on the knowledge you currently have. I might think that buying the armchair will make me more happy than buying the Tesla, but for all I know the chair fabric was made with a chemical that will give me cancer a year later. In which case, the actual utility function is not the fabulous upward line I posted above but a really badly downward sloping one. Most likely I didn’t know that in advance, so reeeaaally the utility function of buying that chair we should be looking at is something like the set of all possible utility functions, how likely it is that each one of them is the true utility function, and weighting and aggregating them all by their respective probabilities. After all there are small, but non-zero, chances that:

The chair fabric will give me cancer

My wife thinks the chair is so ugly she divorces me on the spot for buying it

Zendaya thinks the chair is so amazing she agrees to marry me on the spot for buying it

The chair is actually a rare antique owned by a royal family and I can resell it for 500x what I bought it for

The manufacturer hides a bomb in the cushion

Now, I don’t know which one of these (if any) is the case, and I also don’t precisely know how likely any of them are. These are, of course, comical examples where the probability is basically zero. But the point is that there is uncertainty with regards to maximizing utility, and that uncertainty compounds when you try to maximize utility for huge numbers of people, with decisions that affect huge numbers of things, over long periods of time. Sometimes, we’re just rolling the dice and hoping we did the “right” thing.
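The “weight every possible outcome by its probability” idea is usually called expected utility, and it can be sketched in a few lines of Python. Every probability and util value below is invented (and set far higher than “basically zero” so the effect is visible); `OUTCOMES` and `expected_utility` are hypothetical names for illustration.

```python
import math

# (outcome, probability, utils): every number here is made up for illustration.
OUTCOMES = [
    ("it's just a nice chair", 0.996, 60.0),
    ("the fabric gives me cancer", 0.001, -10_000.0),
    ("my wife divorces me over it", 0.001, -5_000.0),
    ("Zendaya marries me over it", 0.0005, 5_000.0),
    ("it's a royal antique worth 500x", 0.001, 8_000.0),
    ("there's a bomb in the cushion", 0.0005, -20_000.0),
]


def expected_utility(outcomes) -> float:
    """Probability-weighted average of utils across all possible outcomes."""
    total_probability = sum(p for _, p, _ in outcomes)
    if not math.isclose(total_probability, 1.0):
        raise ValueError("probabilities must sum to 1")
    return sum(p * u for _, p, u in outcomes)


print(round(expected_utility(OUTCOMES), 2))  # 45.26, versus 60 if nothing weird happens
```

The catch is the one described above: the answer is only as good as the probabilities you feed in, and those are exactly the numbers nobody actually knows.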

Deontology

Deontology comes from the Greek word deont relating to “that which is binding, duty”2. “Duty” is a good way to summarize this, because Deontology appeals to a sense of duty to absolute moral principles and natural laws about that which is good. Rather than look at the economic or utilitarian consequences of what happens, we ought to just establish fixed virtuous rules and abide by them. Or said another way: The ends do not necessarily justify the means.

For instance, let’s establish a moral rule like “Don’t steal”. If someone else owns something, you should not take it from them by force or fraud. Deontologists would not calculate the utility that might result from stealing; you just don’t do it. Even if stealing $200k from Lebron James and giving it to a poor person may result in an increase in total happiness of the system, we respect property rights. If we believe in property rights, then they are absolute. There is no excuse to violate them because you think someone else (or some other group) might be better off with that property. Maybe it’d be great if Lebron James donated money to help the poor voluntarily, but Deontological ethics would not allow for me to take it from him and redistribute it of my own volition.

This removes the burdensome calculation of tracking the utility function of society, but replaces it with the other problem of determining:

What are the absolute moral principles and natural laws

Where do they come from or how do we justify them

Bentham was profoundly against the idea of rights coming from God, or nature, or universal laws3 so we’re going to have to turn to Immanuel Kant to describe this in an archaic and old-timey European way. In Groundwork of the Metaphysics of Morals (1785) he writes:4

Act as though the maxim of your action were to become, through your will, a universal law of nature.

Act in such a way as to treat humanity, whether in your own person or in that of anyone else, always as an end and never merely as a means.

Act only so that your will could regard itself as giving universal law through its maxim.

Notice Kant does not refer to “creating good outcomes”, only that you should act good and have that be the end in and of itself. This is reminiscent of rules like “Treat others as you would wish to be treated” or if you want to sound extra pointy-headed about it: “Do unto others as you would have them do unto you”. Don’t steal, don’t murder, don’t physically harm people, don’t commit fraud, don’t violate someone’s life, liberty, or property. Just don’t do it, end of discussion.

However, this still ostensibly leaves us with the issue of “Why are theft/murder/assault/property crimes universally morally wrong?” Bentham would argue that the only universal objective of morality is to increase overall good. Some people might say that your right to be free of crimes like this comes from God, or natural law. But both of those are kind of just appeals to authority. “Of course stealing is wrong! The Bible says so!” would not convince anyone who doesn’t accept The Bible as an authority, nor does saying “property rights and safety in your own person are the natural extension of enjoying the fruits of your own labor” really resolve it. Proving the moral legitimacy of natural rights is a whole other ballgame, which is why it’s easier to just tell kids “treat others as you would wish to be treated” rather than having them read John Locke.

Nothing Stops This Trolley

The classic problem that seeks to pit these two schools of thought against each other is The Trolley Problem. It goes something like this:

Suppose there is a train, barreling towards five people who are tied down to the tracks. You are some distance away, near a switch that you could flip to redirect the train and save them. However, this will redirect the train on another path, where a different person is tied down to the tracks. By flipping the switch, you will save the five people but kill the one other person. Should you do it?

This problem is so classic and silly in its premise it’s an internet meme at this point5. But if we try to take it seriously we can evaluate the options as follows:

Consequentialist - Flip the switch. Yes you’ll be dooming that other guy to his death, but you’ll save five others. The lives of five people are worth more than the life of one, so we maximize total utility.

Deontological - Don’t flip the switch. Intentionally choosing to kill someone is wrong, even if you think it’ll save more lives in the future.

The vast majority of people would probably just say you should flip the switch to save five and kill the other guy. While I love a good fantastical hypothetical thought exercise as much as the next guy, the base version of The Trolley Problem is a bad way to compare these two moral systems or determine which one you favor. It masquerades as a simple “should you choose to kill one to save five” but it removes essential real world context in such a way that heavily biases it towards the Consequentialist answer.

It removes any trace of the aforementioned probability or future uncertainty:

It is 100% guaranteed the five people will die if you do nothing, and 100% certain they will all be saved if you flip the switch, and 100% clear there are no other options available

The operative decision in question (flipping a switch) is so trivial and frictionless that not doing something is essentially the same thing as doing something

If all I have to do is flip a switch to save the five people, then me not flipping the switch is not simply indifference; it really feels more like me intentionally killing those five people.

For instance: If I (an able-bodied healthy adult) was at my brother’s house, walked into the bathroom, and saw his two year old son drowning in the bathtub by himself, and I noticed this and I just did nothing at all to save him… I think most people would agree I’m at least a little guilty of some kind of crime here. Sure he’s not my kid, and sure it was probably his parents’ responsibility to not leave him alone in the bathtub in the first place not mine… but considering that it would cost me basically nothing at this point and put me in zero danger or liability whatsoever to save this innocent child, I think me refusing to do it would be an issue to most people.

In The Trolley Problem, sure it wasn’t me who tied those five people down to the tracks or sent the train towards them, but considering that all it takes is for me to flip a switch to save them, me not saving them is akin to me not saving my drowning nephew.6

Thankfully The Trolley Problem is really a series of problems, and subsequent versions are more interesting and not as stacked towards Consequentialism. The basic “kill one to save five” formulation can also be reframed as:

Trolley heading down the tracks to kill five people, but this time the only way to save them is by pushing a large heavy guy (standing next to you) in front of the trolley to stop it.

By pushing a guy onto the tracks, we are making the intention to do harm more clear and also making the operative decision less simple and dissociated than flipping a switch

A riotous mob has five hostages and is threatening to kill them unless someone is executed for the perceived grievance they are angry about. Is it ethical for a judge to scapegoat and hang some innocent guy to save the five hostages?

Introduces uncertainty for the Consequentialist case (is that really guaranteed to save the hostages, will the justice system be compromised in the long run, etc) and further deepens the intentionality of the action (not just flipping a switch, not just pushing a guy, but actually falsely accusing, arresting, trying, and executing a guy)

Five people in the hospital need different organ transplants or else they die. Is it ethical to kidnap, kill, and organ harvest an innocent guy to save them?

Same issues as the above, but you might question the Consequentialist position now also by asking about the people involved

e.g. Are we killing Mr. Rogers to save five convicted felons?

Are we killing a 25 year old guy with a wife and three young children in order to save five retired 90-year old dudes who have no families?

Somehow these questions don’t really come to mind when it’s just people who are all inexplicably tied to train tracks with no way of being saved.

Kant Keep Up With Me

If it’s not already clear, I personally lean towards Deontology. I enjoy utility functions and mathematical abstractions as a way to frame the issue, but in reality I generally refuse the notion that any given social policy issue is so clear cut that it can simply be plugged into an equation and the long-run outcome determined in advance. Consequentialist-style maximization attempts become increasingly ludicrous as you make decisions regarding larger populations, larger geographic areas, longer time spans, and more complex systems. Consequentialism relies on the hope that you can reliably model or predict such things, which I reject. I also reject the notion that, even if we could do such a thing (and to be clear, I firmly do not believe we can), this would make it ok to violate natural rights or levy moral obligations on people who didn’t ask for them.

Many people who advocate for social policy that serves the goals of Consequentialist ethics don’t really live in a way that demonstrates a commitment to that. If you live in the United States and are doing at all well for yourself financially, you are significantly better off than billions of people around the world. We could easily make a Consequentialist argument that you should make efforts to give up large percentages of your money to people living in destitute poverty. You should theoretically keep donating money to the poor until the quality of life between you and the poor is actually about to reach equilibrium. Sure you might not be Lebron James rich, but comparatively you are still much richer than many many other people and I could easily make the argument that total utility would increase if you gave up substantial portions of your wealth.
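To see why that argument runs all the way to equilibrium, here is a small Python sketch. It assumes the same kind of logarithmic utility of wealth as a stand-in for “money matters less the more you have”, uses invented wealth figures, and the function name `donate_until_no_gain` is hypothetical.

```python
import math


def utils(wealth: float) -> float:
    """Assumed utility of wealth: logarithmic (an illustrative simplification)."""
    return math.log(wealth)


def donate_until_no_gain(donor: int, recipient: int, step: int = 1_000):
    """Keep donating `step` dollars for as long as total utility still goes up."""
    donated = 0
    while donor - step > 0:
        before = utils(donor) + utils(recipient)
        after = utils(donor - step) + utils(recipient + step)
        if after <= before:
            break  # one more donation would no longer raise total utility
        donor -= step
        recipient += step
        donated += step
    return donated, donor, recipient


# Invented figures: a donor with $100k and a recipient with $2k.
print(donate_until_no_gain(100_000, 2_000))  # (49000, 51000, 51000)
```

With these assumptions the “maximize total utility” rule does not stop at a generous check; it stops only when both people have the same amount.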

But basically no one does this, and I wouldn’t suggest this is needed to be an ethical person. To me this is the uncertainty problem in assessing the omni-utility function. Even if you were inspired to donate your wealth to equilibrium with the poor, how do you ensure your charity is well spent? How do you know it goes to deserving people and is not stolen by corrupt bureaucrats? How do you know you couldn’t do more good for more people by investing it yourself?

I live in Manhattan and very few people I know (many of whom collect big paychecks from fancy consulting firms and banks) donate even minuscule amounts of cash to the homeless people on the street for this very reason. Even though I could easily appeal to total utility and say “They need it more than you do!” What if this person just spends it on drugs? What if this person is a criminal? You don’t know the utilitarian outcome for sure, so you just don’t do it.

Edge-Case Skulduggery

Most counter-arguments to Deontology or universal moral principles rely on absurd hypotheticals like The Trolley Problem. Or other outlier edge-cases such as:

My kid is dying and there’s only one doctor who has the cure and they refuse to sell it to me or work out any kind of deal. Is it ok for me to steal it even though stealing is wrong???

You fall out of a balcony, but you manage to catch a flag pole before falling to your death. You could save yourself by kicking in your downstairs neighbor’s window but he appears and informs you that you don’t have his consent to kick in his window, and in fact also do not have his consent to hold on to his flagpole. Should you let go and die because trespassing is wrong???

There’s an asteroid hurtling towards the planet that will kill everyone and there’s only one guy with the weapon to redirect it and he won’t do it cause he wants twelve trillion dollars right now… can we force him to do it now even though forcing people to do stuff is wrong???

If you spend even 5 minutes thinking about all the assumptions implicit in these bizarre examples it’s clear that none of them have ever happened ever in the history of humanity, and they’re all just as over-simplified and greased up as The Trolley Problem. Even taking that “Is it ethical to steal medicine to save my sick kid” example, we’re assuming:

The kid is definitely terminally ill

The cure will definitely work with no issue

This is the only treatment option

This one doctor adamantly refuses to accept any kind of reasonable arrangement to save a dying child

The cure is like some trivially stealable pill in an easily accessible cabinet. Rather than a treatment plan that would require kidnapping/enslaving people, or some highly controlled substance that would require armed robbery

Even if we just reduce it to “is it ethical to steal money to pay for necessary medical treatments” it’s still possible to inject doubt. As a Consequentialist, would you agree to the government legalizing robbery for people who cannot afford a medical treatment? Or would you say something like “Oh no that would be a bad idea because in the real world criminals would just pretend to have medical problems to rob others, or it would lead to violence, or healthcare providers would jack up their prices knowing the customers can legally loot and plunder to pay for it.” Well, if we’re basing ethical decisions on real world consequences there’s no sense in resorting to fictional edge cases right?

I could argue silly edge cases against Consequentialism too. Suppose experts predict (Minority Report style) that:

Enslaving 10% of the population, putting them on amphetamines, and having them troubleshoot AI programs and supply chain problems until they die would lead to like 100x wealth for the other 90%. Total utility would go up! Should we do it?

Based on genetics, brain scans, macroeconomic factors, and psychoanalysis of upbringing, my two year old nephew will grow up to be a heinous horrible dictator who will murder millions of people and plunge the world into darkness and tyranny. Should we just drown little Billy in the bathtub so he doesn’t grow up to be super Hitler?7

The Means Justify The Ends

Not that this matters that much to me, because personally I have lots of faith that the action that is objectively, morally right in a Deontological sense usually leads to the greatest good for the greatest number in the long run. It’d be outside the scope of this post to prove the whole case throughout history, but let’s just take one example like American Slavery. If you and I are arguing in 1850, I could say we should abolish slavery with both ethical frameworks:

Deontology - “We should abolish slavery because it’s morally wrong. It’s wrong to force someone else to work for you unpaid and under threat of violence. We all know enslaving people simply for being born into the wrong group cannot be a universal moral law, so abolish it.”

Consequentialist - “We should abolish slavery because it’s economically inefficient. Central planning doesn’t maximize value. If we enslave an entire group of people based on nothing but superficial characteristics and force all of them to be house servants or farm workers their whole lives, society will no doubt lose some number of potential scientists, teachers, artists, architects, statesmen, entrepreneurs, military tacticians, etc. By forcing them all into menial labor forever they won’t be free to develop their individual talents and make their best and highest contributions to the economy.”

In this example, I do believe the Consequentialist position. I actually do believe that a system of legalized slavery harms the economy in the long run, because it stifles innovation. Slavery only benefits the small group of the population that were slave owners. And I believe the moral position aligns with the best consequences in many, many other examples.

But that’s not why I’m against slavery, and I don’t think that’s why most people are either. If you and I are arguing in 1850 and I give you the Deontological abolitionist pitch and you respond with “Ok but without slaves, who would pick the cotton?” I don’t believe you’ve actually made a valid counter here.

I might give you the Consequentialist position just because I think that’s the only thing you’ll listen to. I might even take a sip of my 1850s barrel ale and say “Nah don’t worry because you see, in the future? We’re not gonna need slaves to pick the cotton. We’re going to have this great big machine with a comfy leather seat and automatically cooled cabin that picks the cotton for you! Yeah you’ll just sit there, push your foot down on this pedal, and then this constantly exploding set of metal pistons will push the machine across the field and automatically harvest the cotton without any effort! And it’s gonna run by burning the compressed organic matter from plants/animals from millions of years ago! It’s gonna do the day’s work of 100 slaves in like an hour! Oh and if it ever breaks? You’re just gonna take this glowing rectangle out of your pocket, touch it a few times, and someone will just come to your house personally to fix it for you or have a replacement part!” And in 1850 you’d probably say that’s a nonsense pipe dream, and my abstract philosophical finger-wagging has no place in the real world where cotton really has to be picked… but this is exactly what happened after we abolished slavery and it’s orders of magnitude more efficient.

Maybe just do the right thing, and things will work out alright.

1. https://www.laits.utexas.edu/poltheory/bentham/ipml/ipml.c01.html

2. https://www.etymonline.com/word/deontology

3. Stating in Anarchical Fallacies (1795): “In proportion to the want of happiness resulting from the want of rights, a reason exists for wishing that there were such things as rights. But reasons for wishing there were such things as rights, are not rights;—a reason for wishing that a certain right were established, is not that right—want is not supply—hunger is not bread.

That which has no existence cannot be destroyed—that which cannot be destroyed cannot require anything to preserve it from destruction. Natural rights is simple nonsense: natural and imprescriptible rights, rhetorical nonsense,—nonsense upon stilts.”

4. https://www.earlymoderntexts.com/assets/pdfs/kant1785.pdf

5. https://knowyourmeme.com/memes/the-trolley-problem

6. Who is not real, I don’t have any nephews at time of writing this and should any future nephews of mine ever need me to rescue them from drowning in a bathtub I assure you I am up to the job

7. Again, not a real person. I don’t have any nephews at time of writing this and should any future nephews of mine ever need me to stop them from becoming super Hitler, I’ll figure out how to do it non-violently